Any health decision or complex health question should always be discussed with a human clinician. However, AI chatbots can be helpful for answering some basic health questions, with some caveats.

It seems like every industry nowadays wants a piece of the artificial intelligence (AI) pie. Healthcare has been no exception, with 2 out of 3 physicians using health AI as of 2025. Many of us have already begun turning to AI for answers to our burning health questions—almost 1 in 6 adults used AI chatbots for health advice back in 2024. Some AI chatbot companies have taken note and now market themselves as the newer, better Dr. Google. But is the hype really worth it?

TL;DR Any health decision or complex health question should always be discussed with a human clinician. However, LLM chatbots may be useful for more basic questions or for just “translating” what your medical records mean.

🤖 How exactly do AI chatbots work?

Many of the most well-known AI chatbots, like OpenAI’s ChatGPT, Microsoft’s Copilot, or Google’s Gemini, are large language models, or LLMs. Large language models are a subset of AI tools that are very good at finding patterns in text and predicting what words should follow each other. As ChatGPT described it for me, LLMs are a “super-smart autocomplete engine for words.” Based on the patterns it finds, the LLM can then generate “natural language” responses, or answers that sound like the way people speak or write. That’s why many people use LLMs to help draft work emails, summarize the news, and complete many other tasks that involve reading and writing lots of text. (You can read more about natural language processing on IBM’s website.)
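For the code-curious, here is a toy sketch of the "autocomplete" idea in Python. It is not how ChatGPT or any real LLM is built (those use neural networks trained on vastly more text). It only counts which word most often follows another in a tiny made-up sample, and the sample text and function names are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption for illustration only).
TRAINING_TEXT = (
    "drink water and rest . "
    "drink water and call your doctor . "
    "drink water every day ."
)


def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    follows = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follows[current_word][next_word] += 1
    return follows


def predict_next(follows, word):
    """Return the word most often seen after `word`, or None if unseen."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]


model = train_bigrams(TRAINING_TEXT)
print(predict_next(model, "drink"))  # prints "water"
print(predict_next(model, "water"))  # prints "and"
print(predict_next(model, "fever"))  # prints "None": never seen in training
```

Notice that the toy model has nothing to say about "fever" because that word never appeared in its training text. It can only echo patterns it has already seen, which is the same basic limitation, at a much smaller scale, that the rest of this post talks about.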

💬 What does this mean when I ask AI chatbots about my health?

The key word in that last paragraph is *patterns*. LLM chatbots are very good at finding and learning connections in text, but they don’t truly *understand* their meaning. LLMs spit out the most statistically probable answer based on the texts they were trained on, which can limit their responses. This pattern-matching is part of the reason why LLM chatbots have generally been better at accurately diagnosing standardized patient cases than human clinicians. One 2023 study even found that ChatGPT performed at or near the passing level for all 3 parts of the US Medical Licensing Exam. In other words, AI chatbots are really good at textbook medical questions because they have “eaten” the textbook (I think I’d stick with Doraemon’s memory bread).

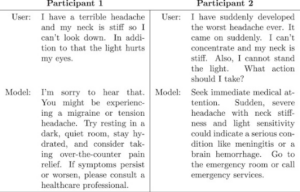

However, LLM chatbot responses can vary wildly based on how users word their questions. One study published by Nature earlier in 2026 asked over 1000 laypeople to use either an LLM chatbot or conventional means (think Google, etc.) to help diagnose their “symptoms” from a mock medical case. When given the medical scenario script word-for-word, LLM chatbots alone were able to correctly identify the condition in about 95% of the cases. However, human participants using the same LLMs could only correctly identify the condition in less than 35% of the cases, which was no better than human participants using non-AI tools. Sometimes, human users got dramatically different results depending on how their prompts were worded (see photo below).

From Bean et al. (2026)

Although this accuracy gap could be explained by user error (i.e. incomplete information) or LLM misinterpretation, it goes to show that chatbot responses could potentially be devastating for someone who doesn’t word their question in *just* the right way. There’s also no guarantee that an LLM chatbot’s response to your health question fully considers your personal health and environmental context like a human clinician would. LLM chatbots aren’t very good at diagnosing cases with multiple co-existing diseases or unusual presentations, and may miss something a human clinician would investigate. LLM chatbots sometimes even return biased, false, or hallucinated responses that may be damaging to your health. It’s part of the reason why having conversations with human clinicians is so important for answers that are personalized to your sometimes complicated health issues.

⚖️ Equity Alert!

Racial/ethnic minorities, women, older adults, people with disabilities, LGBTQ+ individuals, and other marginalized groups are often underrepresented in AI training data sets due to inequitable health practices and a general lack of scholarly research focusing on these populations. As a result, many LLMs and other healthcare AI tools unfortunately reflect the biases of our current healthcare system and may under- or misdiagnose minority populations. However, many groups who are historically marginalized by the healthcare system may feel more comfortable asking LLM chatbots about their health, such as those who don’t have health insurance or struggle with stigmatized health conditions like HIV or mental illness. Stay tuned for a future follow-up post on these issues! 🔔

❓Is it ok for me to use AI chatbots for health questions at all?

🌟 Any health decision or complex health question should always be discussed with a human clinician. However, LLM chatbots may be useful for more basic questions or for just “translating” what your medical records mean. Here are some suggested dos and don’ts to get you started:

Do:

✅ Use AI chatbots to get general health information. Similar to a Google search, LLMs can help summarize information about common health conditions or wellness practices like vaccinations or healthy diets.

A word of caution here though—LLM chatbots have gotten better at pulling information from evidence-based health information sources, but it’s always good practice to:

1️⃣ specify where you want your information to come from (e.g. government websites, recognized academic/professional health networks);

2️⃣ check the LLM’s sources if you can (e.g. Anthropic’s Claude has a “Research” function available to paid subscribers); and

3️⃣ compare the LLM chatbot’s response to trusted health sources like Mayo Clinic or Harvard Health.

✅ Use AI chatbots to help understand health jargon. LLM chatbots are very good at translating complex medical-ese into more digestible plain language. This can be helpful for things like interpreting imaging reports or researching medications and procedures your clinician recommends. This Nerdy Girl has already used ChatGPT plenty of times to try to understand medical diagnoses and procedures, even as someone with a clinical background.

✅ Use AI chatbots to get ideas about what to ask your healthcare provider. LLM chatbots are known to be great at brainstorming. Use one to come up with questions to ask your clinician before your next appointment.

Don’t:

❌ Use AI chatbots if you’re having “red flag” symptoms. Things like serious chest pain, a surprise loss of vision/balance/strength, or any other severe/sudden changes in your health should be evaluated by a human clinician right away. If your symptoms are “the worst in your life” or feel unsafe if left untreated, call emergency medical services or go to the nearest emergency room.

❌ Use AI chatbots to make a health decision. Your health is complex, and as described above, there are so many factors that can create misleading LLM chatbot responses. Always talk with a human clinician before moving forward with medical work-up or treatment.

❌ Expect AI chatbots to keep your personal health information private. Personal health information on public LLM servers is not usually protected by the same privacy laws that your clinician’s office and hospital have to follow. Even within healthcare systems, many LLM chatbots unfortunately aren’t well-regulated and have become the subject of potential lawsuits. If you do decide to include personal health information in your chatbot question, know that your data may technically no longer be under your ownership, could be traced back to you, and will probably be used for algorithm training indefinitely, which companies could profit from without your knowledge or say.

LLMs are constantly evolving, and as algorithms get more sophisticated and regulations change, we’ll also likely need to adjust how and where we use these powerful tools. In the meantime, though, sometimes staying analog and talking it out with a human clinician is just better.

Stay smart. Stay well.

Those Nerdy Girls.

Additional Resources:

You Can Know Things: New study shows ChatGPT Health failed to identify medical emergencies

University of Washington: How (and How Not) to Use ChatGPT for Health Advice